Quantum Computing: The Revolutionary Technology

Imagine a world where we can discover life-saving medications in months instead of years, predict weather patterns with unprecedented accuracy, and solve complex optimization problems that would take today's supercomputers millennia to crack. This isn't science fiction—it's the promise of quantum computing, a transformative technology that's moving from research labs to real-world applications faster than most Americans realize. As we navigate through 2025, quantum computing stands at an inflection point, ready to revolutionize industries from healthcare to finance, cybersecurity to climate science.

The race for quantum supremacy has captured the imagination of tech giants and startups alike, with companies like IBM, Google, and Microsoft investing billions into quantum research and development. For the average American, understanding quantum computing is no longer optional—it's becoming essential as this technology begins to impact our daily lives, from the security of our online banking to the medications our doctors prescribe. This comprehensive guide will demystify quantum computing, exploring how it works, why it matters, and what it means for our collective future.

Understanding the Fundamentals: What Makes Quantum Computing Different

At its core, quantum computing represents a radical departure from the classical computing paradigm that has powered everything from smartphones to supercomputers for decades. Traditional computers, no matter how powerful, process information using bits—tiny switches that can be either 0 or 1, on or off. Every calculation your laptop performs, every video you stream, every message you send relies on these binary building blocks working in combination.

Quantum computers, however, operate on fundamentally different principles borrowed from quantum mechanics, the branch of physics governing the behavior of matter and energy at the atomic and subatomic levels. Instead of classical bits, quantum computers use quantum bits, or qubits, which can exist in a combination of states thanks to a phenomenon called superposition. A qubit can be in the state 0, the state 1, or a weighted blend of the two, and a register of many qubits can hold a superposition over all of its possible bit patterns at once. Measuring the register still yields only a single pattern, so the advantage comes from algorithms that manipulate the whole superposition before reading out an answer, not from storing more data than a classical machine.

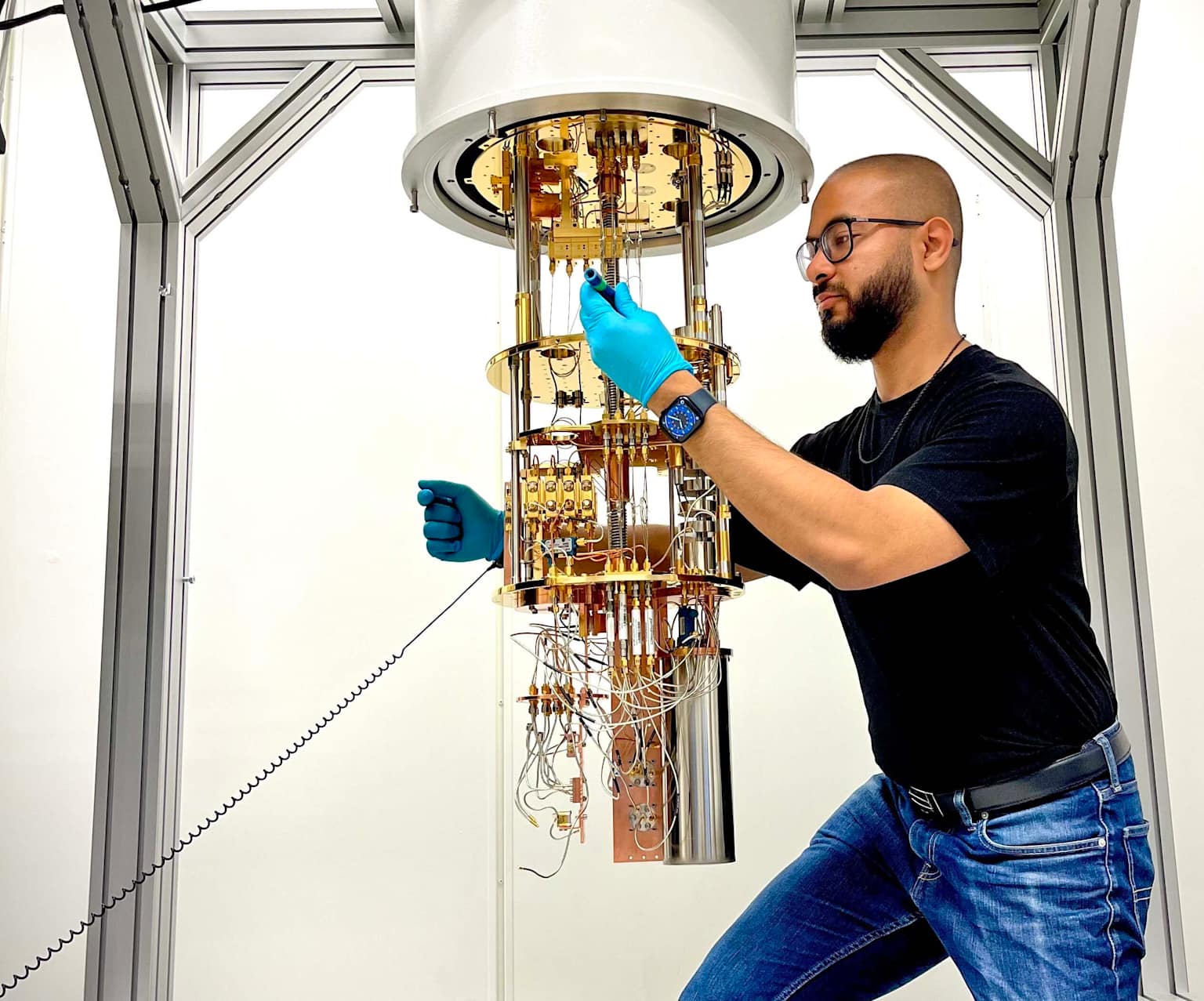

Technician working on a quantum computer dilution refrigerator in a laboratory setting

To understand just how revolutionary this capability is, consider a simple comparison: a classical register of 16 bits holds exactly one of 65,536 possible values at any given moment, while the state of 16 qubits can be a superposition that assigns a weight (an amplitude) to all 65,536 values at once. This exponential scaling is what makes quantum computing so powerful. As you add more qubits, the size of this state space doesn't just increase linearly; it doubles with each additional qubit. The catch is that a measurement returns only one of those values, so the scaling translates into a speedup only for problems where an algorithm can steer the amplitudes toward the right answer.

The mathematics behind this phenomenon is straightforward yet profound. For any quantum system with N qubits, the number of basis states that can appear in a superposition is 2^N. A classical register with 50 bits holds one 50-bit value at a time, whereas describing the state of 50 qubits takes 2^50 amplitudes, over one quadrillion (1,125,899,906,842,624) of them. Operating on all of these amplitudes in a single step is what researchers call quantum parallelism, and it's the ingredient that could allow quantum computers to solve certain problems far faster than any known classical method.
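To make the 2^N scaling concrete, here is a minimal Python sketch. The function names are illustrative, and the 16 bytes per amplitude is an assumption (one double-precision complex number); it simply shows how quickly a classical description of the state grows.

```python
def state_vector_size(num_qubits: int) -> int:
    """Number of complex amplitudes needed to describe num_qubits qubits."""
    return 2 ** num_qubits


def classical_memory_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store every amplitude classically.

    Assumes 16 bytes per amplitude (two 64-bit floats); this is an
    illustrative choice, not a property of any particular simulator.
    """
    return state_vector_size(num_qubits) * bytes_per_amplitude


for n in (16, 30, 50):
    print(n, state_vector_size(n), classical_memory_bytes(n))
```

Under that assumption, 30 qubits already need 16 GiB to store, and 50 qubits need about 16 PiB, which is why classical simulation of even modest quantum registers becomes impractical.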

The Quantum Mechanics Behind the Magic: Superposition, Entanglement, and Interference

Three core quantum mechanical principles power quantum computing: superposition, entanglement, and interference. Understanding these concepts is key to grasping both the potential and limitations of quantum technology.

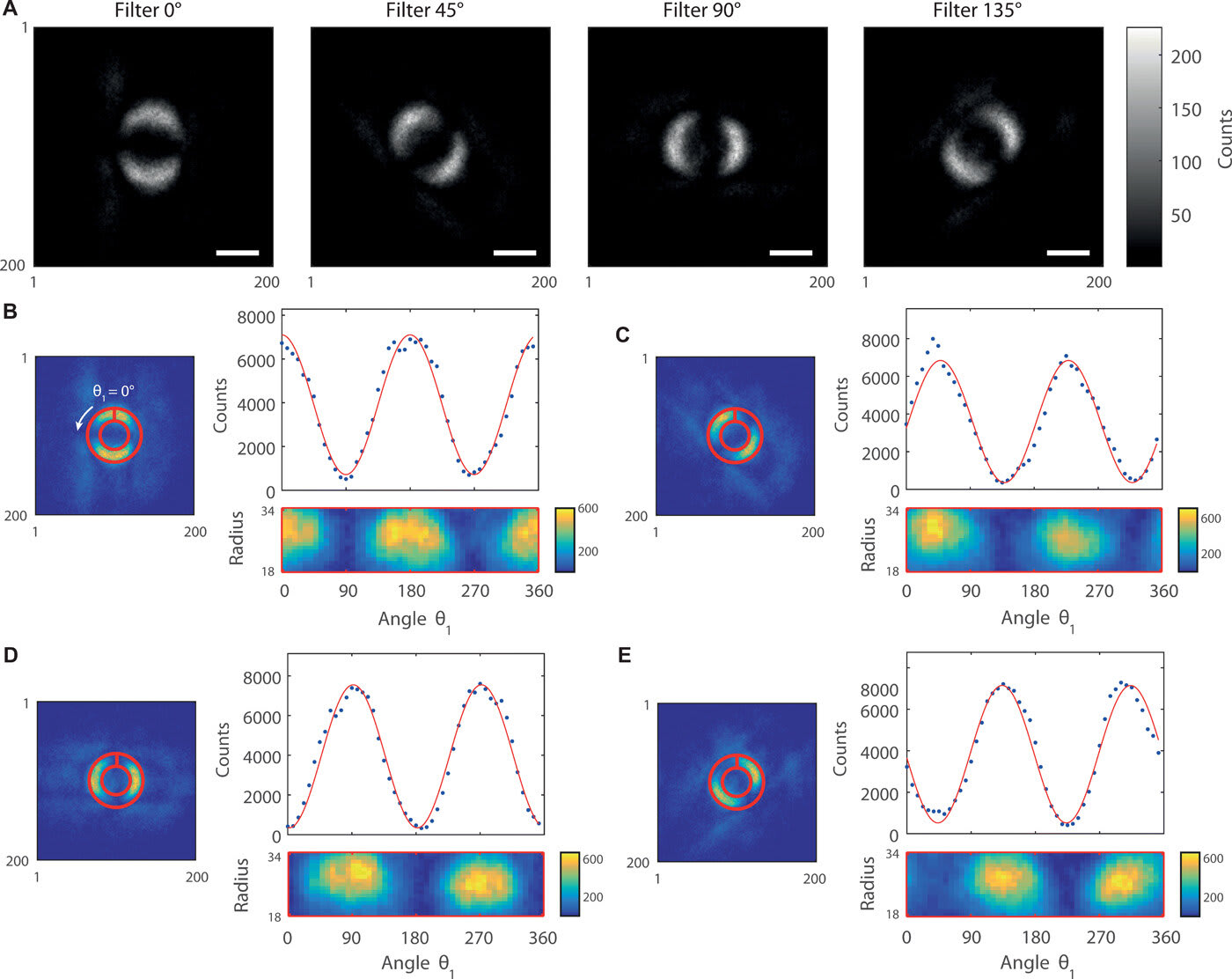

Visual representation of quantum entanglement through filtered angular intensity patterns and correlation plots

Superposition is the quantum property that allows qubits to exist in multiple states simultaneously until they're measured. Think of it like a coin spinning in the air: while it's spinning, it's neither heads nor tails but exists in a probabilistic state of both. Only when it lands (or in quantum terms, when it's measured) does it collapse into a definite state. For qubits, this means a register's state is described by exponentially many amplitudes, which lets a quantum algorithm act on many candidate solutions in a single operation rather than testing them one by one. Because a measurement reveals only one outcome, that parallelism pays off only when it is combined with interference to make the right outcome likely.
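The spinning-coin picture can be sketched in a few lines of Python. This is a toy single-qubit simulation, not code for real hardware: the state is a pair of amplitudes, the Hadamard gate is the standard operation that turns a definite 0 into an equal superposition, and the helper names are made up for illustration.

```python
import math
import random


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp0, amp1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return tuple(abs(x) ** 2 for x in state)


def measure(state, rng):
    """Collapse to 0 or 1 at random, weighted by the probabilities."""
    p0, _ = probabilities(state)
    return 0 if rng.random() < p0 else 1


zero = (1.0, 0.0)          # a qubit that is definitely 0
plus = hadamard(zero)      # equal superposition of 0 and 1

rng = random.Random(0)     # fixed seed so the run is repeatable
counts = [0, 0]
for _ in range(1000):
    counts[measure(plus, rng)] += 1

print(probabilities(plus))  # each outcome has probability close to 0.5
print(counts)               # roughly half zeros, half ones
```

Each individual measurement gives a plain 0 or 1; the superposition only shows up in the statistics over many runs.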

Entanglement is perhaps the most mysterious and powerful quantum phenomenon. When qubits become entangled, they can no longer be described independently: measuring one qubit immediately tells you something about the outcomes you will find on the others, regardless of the distance separating them. Albert Einstein famously called this "spooky action at a distance," although the correlations cannot be used to send information faster than light. Entanglement is what enables qubits to work together in extraordinarily coordinated ways, producing correlations across a whole register that have no counterpart in classical systems and that quantum algorithms rely on for their power.
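A two-qubit toy simulation shows what these correlations look like. The sketch below builds the standard Bell state by applying a Hadamard gate to the first qubit and then a CNOT gate; the list layout (amplitudes ordered 00, 01, 10, 11) and the function names are assumptions made for this example.

```python
import math
import random


def apply_h_first(state):
    """Hadamard on the first qubit of a two-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def apply_cnot(state):
    """CNOT with the first qubit as control: swaps the 10 and 11 amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def sample(state, rng):
    """Measure both qubits; returns a pair of bits (first, second)."""
    r = rng.random()
    cumulative = 0.0
    for index, amp in enumerate(state):
        cumulative += abs(amp) ** 2
        if r < cumulative:
            return index >> 1, index & 1
    return 1, 1  # guard against floating-point rounding


bell = apply_cnot(apply_h_first([1.0, 0.0, 0.0, 0.0]))

rng = random.Random(1)
samples = [sample(bell, rng) for _ in range(20)]
print(samples)  # every pair is (0, 0) or (1, 1); the two bits always agree
```

Each qubit on its own looks like a fair coin, yet the two results always match. Neither side can choose its outcome, which is why the correlation cannot carry a message.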

Interference is the quantum mechanism that amplifies correct answers while canceling out wrong ones. Quantum algorithms are designed to cause interference patterns that constructively reinforce the probability amplitudes of correct solutions while destructively interfering with incorrect ones. This process is how quantum computers navigate the vast landscape of possibilities enabled by superposition and entanglement, ultimately arriving at the most likely correct answer. Think of it as having many quantum paths exploring a maze simultaneously, with the wrong paths canceling each other out while the correct path becomes stronger and more likely to be measured as the final answer.
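The smallest example of interference is applying the Hadamard gate twice to a single qubit. The toy Python sketch below (illustrative names, plain state-vector arithmetic) shows the two paths that lead to the outcome 1 arriving with opposite signs and canceling, while the two paths to 0 reinforce each other.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp0, amp1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


zero = (1.0, 0.0)
once = hadamard(zero)    # equal superposition: both outcomes equally likely
twice = hadamard(once)   # amplitudes for outcome 1 cancel, outcome 0 is restored

print(once)
print(twice)
```

After one gate the qubit is a 50/50 coin; after the second it is back to a certain 0, something no classical coin flip repeated twice could do. Quantum algorithms arrange this kind of cancellation on a much larger scale.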

Building a Quantum Computer: The Engineering Marvel Behind the Qubits

Creating and maintaining qubits is one of the most challenging engineering feats in modern technology. Unlike classical computer chips that can operate at room temperature, most quantum computers require extreme conditions that push the boundaries of what's physically possible.

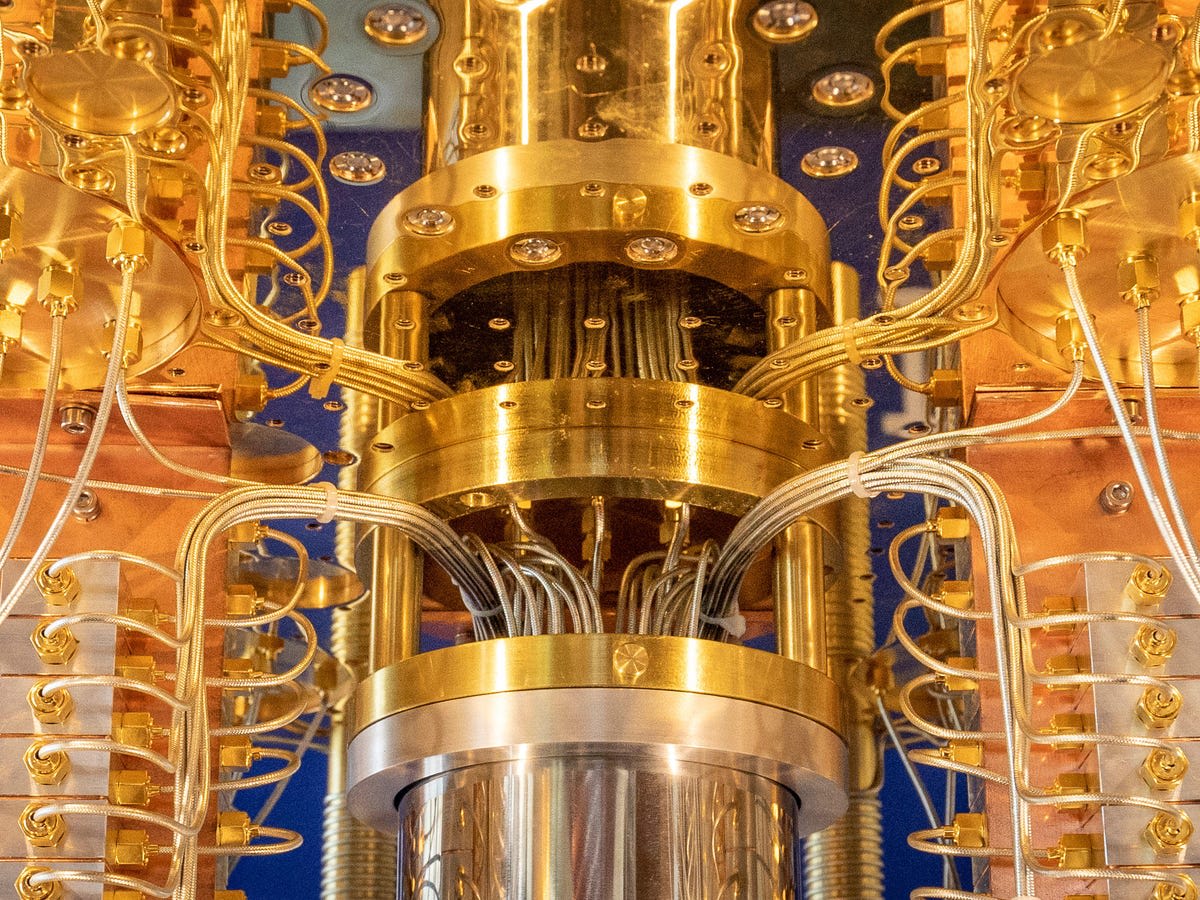

IBM's advanced quantum computing hardware showcasing the intricate quantum processor and wiring components

The most common approach to building quantum computers today uses superconducting qubits, the technology employed by industry leaders like IBM and Google. These qubits are created using superconducting circuits that must be cooled to temperatures near absolute zero: roughly 15 millikelvin, or about -273.135 degrees Celsius, which is far colder than the roughly 2.7 kelvin of outer space. At these extraordinarily low temperatures, certain metals become superconductors, allowing electrical current to flow without resistance and creating the conditions necessary for quantum behavior at a macroscopic scale.

Superconducting quantum computers rely on a critical component called a Josephson junction, which consists of a thin insulating layer sandwiched between two superconductors. This junction allows quantum tunneling, a purely quantum phenomenon in which paired electrons (Cooper pairs) pass through a barrier that classical physics says should be impenetrable. These Josephson junctions create the nonlinear behavior necessary to define distinct quantum energy levels that can be used to encode information, essentially turning electrical circuits into artificial atoms with controllable quantum properties.

The physical infrastructure required to support quantum computers is staggering. Massive dilution refrigerators, often several feet tall and filled with intricate gold-plated components and precision wiring, provide the extreme cooling necessary to maintain quantum states. These refrigerators use a complex cascading system of cooling stages, progressively reducing temperature through multiple levels until reaching the millikelvin regime where quantum processors can operate. The quantum processor itself, or quantum processing unit (QPU), sits at the coldest point of this system, carefully isolated from environmental vibrations, electromagnetic interference, and thermal fluctuations that could destroy the delicate quantum states.

Alternative approaches to building qubits are also being actively pursued. Trapped-ion quantum computers use electromagnetic fields to hold individual ions in place and manipulate them with precisely tuned lasers, offering extremely high coherence times and gate fidelities but facing challenges in scalability. Photonic quantum computers encode quantum information in the properties of light particles, operating at room temperature and offering advantages for quantum communication, though implementing logic gates remains challenging. Topological qubits, still largely theoretical, promise inherent protection against certain types of errors by encoding information in the global properties of quantum systems rather than in individual particles.

The Quantum Computing Industry: Tech Giants and Ambitious Startups

The quantum computing landscape in 2025 is characterized by intense competition and unprecedented investment. The global quantum computing market has reached between $1.8 billion and $3.5 billion in 2025, with projections suggesting explosive growth to $20.2 billion by 2030, representing a compound annual growth rate exceeding 40 percent. This makes quantum computing one of the fastest-growing technology sectors of the decade, attracting venture capital, government funding, and strategic investments from major corporations worldwide.
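As a quick sanity check on those figures, the compound annual growth rate can be computed directly. The short Python sketch below assumes a five-year span from 2025 to 2030 and uses the market sizes quoted above; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.42 means 42 percent)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes in billions of dollars, taken from the paragraph above.
from_high_estimate = cagr(3.5, 20.2, 5)
from_low_estimate = cagr(1.8, 20.2, 5)

print(f"{from_high_estimate:.1%}")  # growth needed starting from $3.5 billion
print(f"{from_low_estimate:.1%}")   # growth needed starting from $1.8 billion
```

Starting from the higher 2025 estimate gives a rate a little above 40 percent, and starting from the lower one gives a rate above 60 percent, so "exceeding 40 percent" holds across the quoted range.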

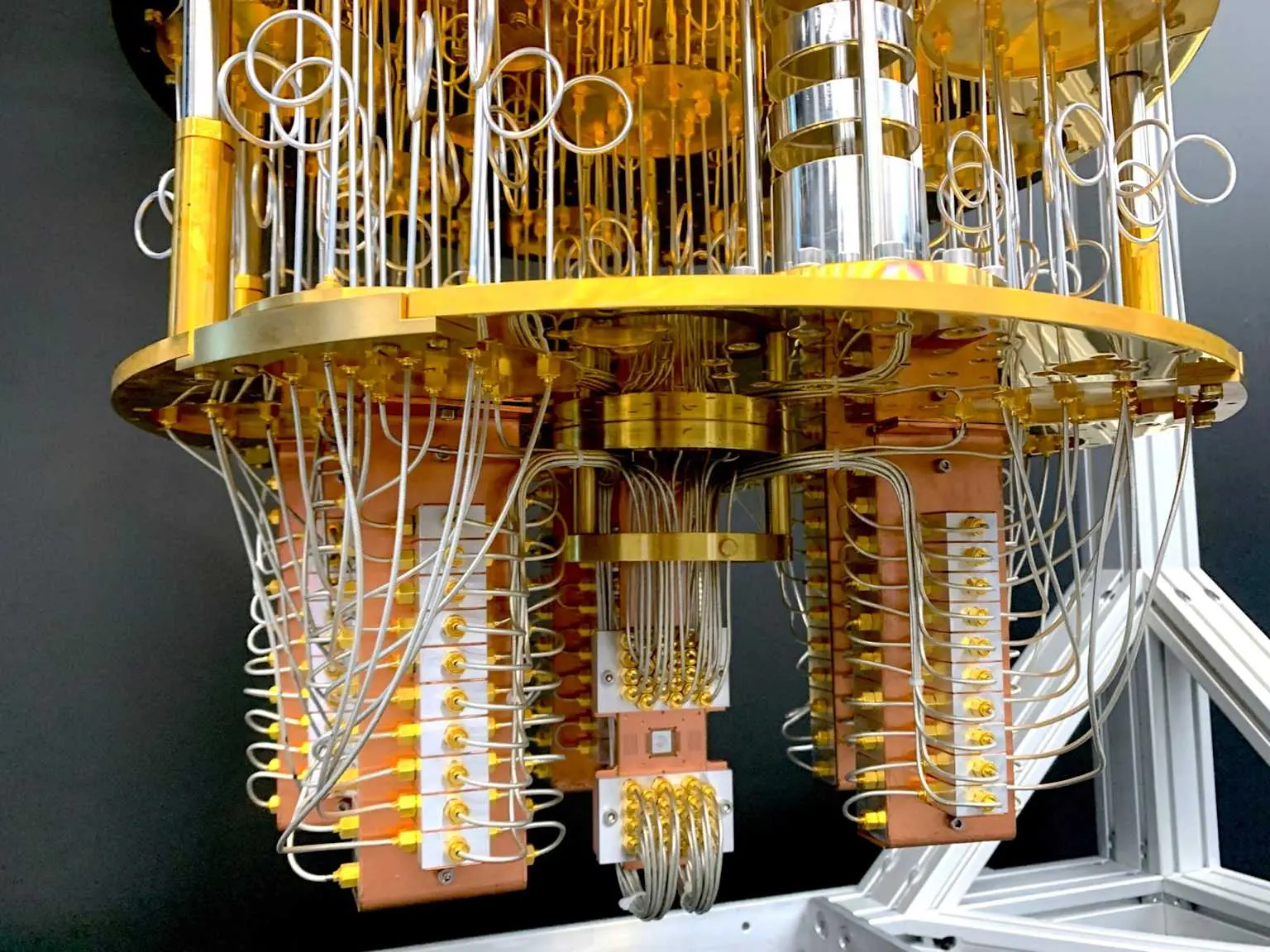

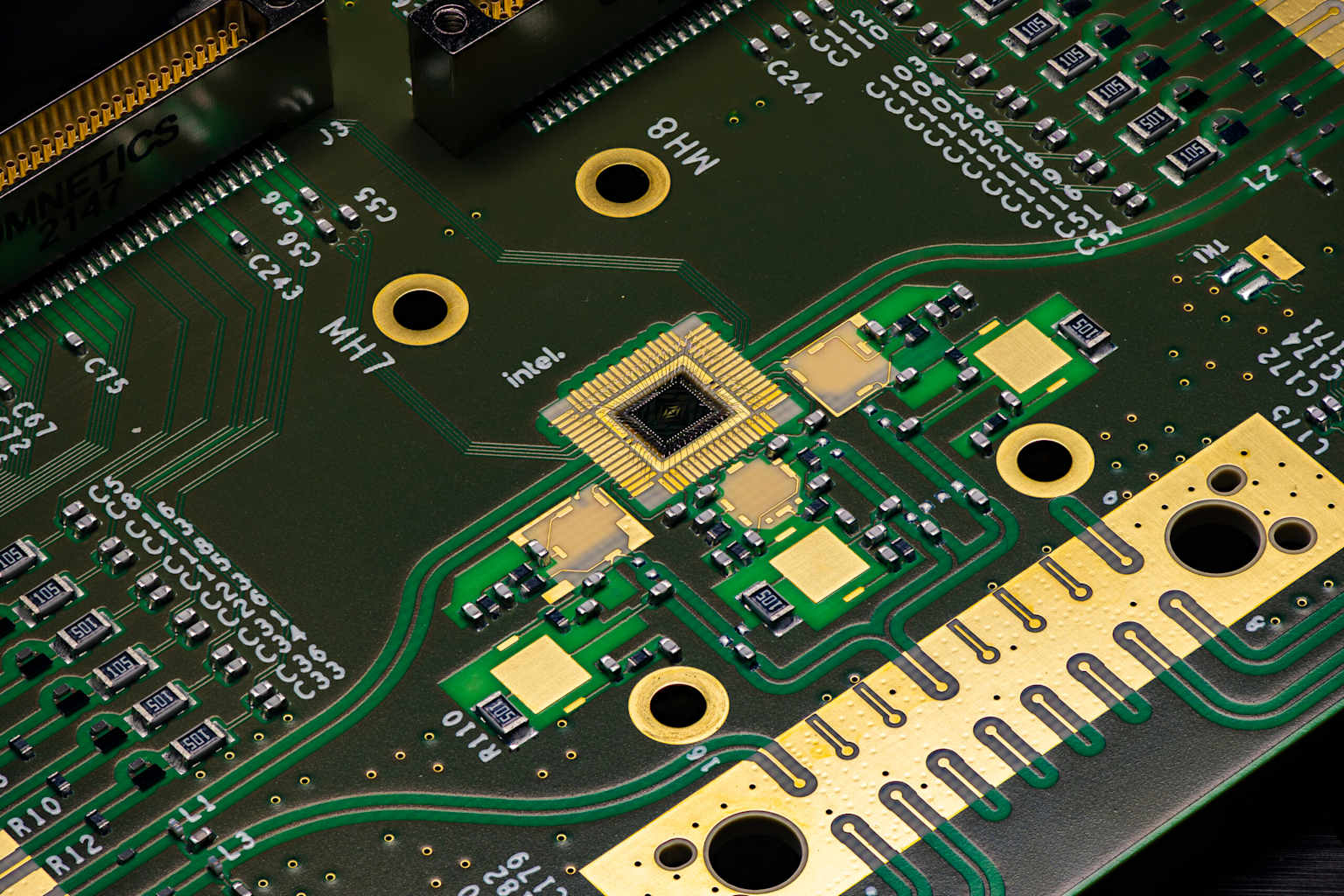

Close-up of IBM quantum computer hardware showcasing intricate wiring and quantum processor components

IBM stands as a pioneering force in quantum computing, with a century-long history of technological innovation now focused on achieving quantum advantage. In November 2025, IBM unveiled its Quantum Nighthawk processor, featuring 120 qubits with enhanced connectivity through 218 next-generation tunable couplers. This architecture enables circuits with 30 percent more complexity than previous generations while maintaining low error rates, allowing users to execute up to 5,000 two-qubit gates in a single computation. IBM's ambitious roadmap extends through 2033, targeting quantum-centric supercomputers with 100,000 qubits utilizing quantum low-density parity-check codes that reduce overhead by approximately 90 percent.

Google Quantum AI has made headlines with breakthrough demonstrations and hardware innovations. The company's Willow chip, featuring 105 qubits, achieved a landmark milestone in quantum error correction by demonstrating that increasing qubit numbers can actually reduce errors—validating a fundamental approach necessary for large-scale quantum computing. In benchmark testing, Willow completed calculations in minutes that would require the fastest classical supercomputer an astronomical timeframe, exemplifying the exponential advantage quantum systems hold over classical methods. Google's claim of achieving quantum supremacy in 2019 with their Sycamore processor, which performed a calculation in 200 seconds that researchers estimated would take classical supercomputers 10,000 years, sparked both celebration and controversy in the quantum community.

Microsoft, Amazon Web Services, and Intel represent additional major players pursuing different technological approaches and business models. Microsoft has partnered with Quantinuum to advance logical qubit development, recently demonstrating the entanglement of 12 logical qubits with dramatically reduced error rates. Amazon has integrated quantum computing into its cloud services through Amazon Braket, allowing customers to experiment with quantum algorithms without massive capital investments in quantum hardware. Intel focuses on spin qubits implemented in silicon, leveraging its semiconductor manufacturing expertise to pursue a potentially more scalable path.

Close-up of Intel's quantum computing processor chip on a circuit board showing detailed circuitry and components

Smaller specialized companies are also making significant contributions. IonQ utilizes trapped-ion technology and in March 2025 achieved one of the first documented cases of quantum computing delivering practical advantage over classical methods in a real-world medical device simulation. SpinQ is democratizing quantum access with compact quantum computers and Quantum-as-a-Service platforms. Rigetti Computing has introduced hybrid quantum-classical systems particularly suited for optimization and machine learning applications. These diverse approaches reflect the reality that multiple quantum computing modalities may ultimately coexist, each optimized for different problem classes.

Real-World Applications: Where Quantum Computing Is Making an Impact Today

While fully fault-tolerant, universal quantum computers remain years away, near-term quantum systems are already demonstrating practical value across multiple industries, particularly in the United States where early adoption is accelerating.

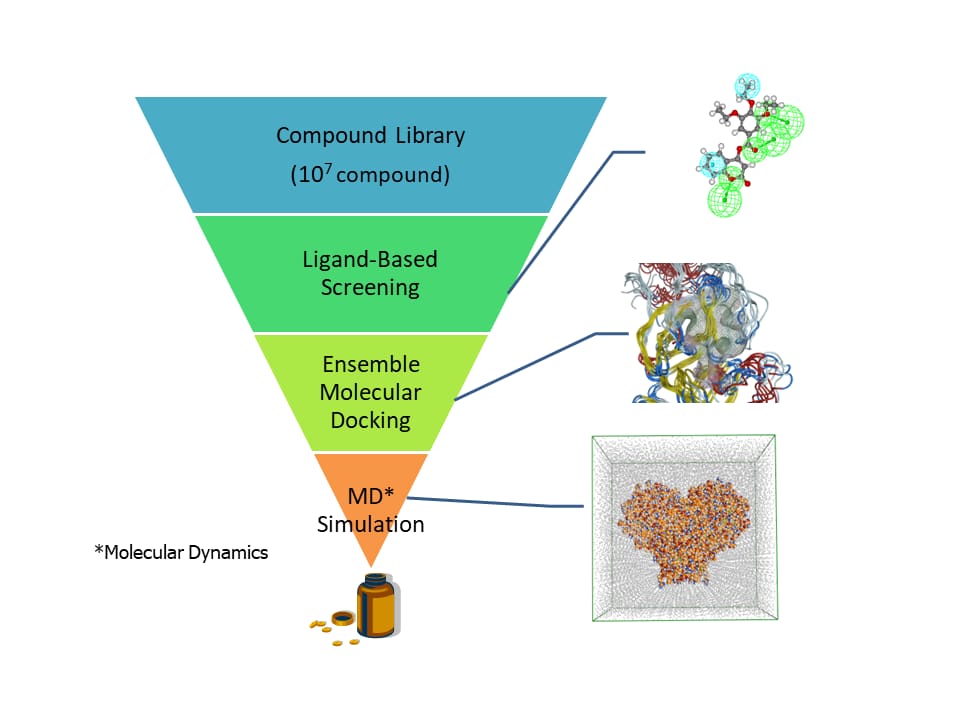

Funnel diagram illustrating stages of drug discovery using molecular simulation methods, from compound library screening to molecular dynamics simulation

In pharmaceutical research and drug discovery, quantum computing is revolutionizing how researchers identify and develop new medications. Pfizer has partnered with IBM's Quantum Network to leverage quantum molecular modeling in searching for new antibiotics and antivirals, addressing one of healthcare's most pressing challenges as antibiotic resistance grows. Cleveland Clinic has collaborated with IBM on cancer research, using quantum computers to model protein-protein interactions—complex molecular behaviors that classical computers struggle to simulate accurately. The fundamental challenge in drug discovery is predicting how molecules will interact inside the human body, a task that quickly overwhelms classical computational approaches due to the quantum mechanical nature of molecular bonds and electron behavior. Quantum computers can simulate these molecular structures and interactions at an atomic level with controllable accuracy, potentially reducing the drug development timeline from over a decade to just a few years while significantly lowering costs.

Financial services represent another sector where quantum computing is delivering measurable benefits. JPMorgan Chase and other major financial institutions are using quantum optimization algorithms to improve portfolio management, cutting problem complexity by up to 80 percent and enhancing risk analysis accuracy. Risk assessment in finance requires simulating numerous market scenarios across multiple timeframes—a computationally intensive task where quantum algorithms can provide quadratic speed-ups through improved data sampling methods. LongYingZhiDa Fintech, a subsidiary of China's Huaxia Bank, developed a quantum neural network that analyzed data from over 2,200 ATMs and achieved 99 percent accuracy in predicting optimal asset reallocation, outperforming traditional algorithms in both speed and precision.

Supply chain optimization has become a proving ground for practical quantum applications, with companies like DHL using quantum algorithms to reduce international shipping delivery times by 20 percent. Amazon Web Services is developing quantum-powered warehouse management systems to optimize global inventory distribution, while ExxonMobil collaborates with IBM on quantum solutions for optimizing maritime inventory routes for liquefied natural gas shipping. Volkswagen has implemented quantum-based traffic management systems in several cities to reduce congestion and carbon emissions. These optimization problems—determining the best routes among millions of possibilities while balancing constraints like fuel costs, delivery windows, and vehicle capacity—are precisely the type of combinatorial challenges where quantum algorithms can explore multiple options simultaneously, finding superior solutions faster than classical approaches.

Weather forecasting and climate modeling benefit from quantum computing's ability to process the massive computational demands of atmospheric simulations. IBM partnered with The Weather Company, UCAR, and NCAR to develop quantum-enhanced weather models offering five times greater resolution than traditional systems. Pasqal and BASF created quantum algorithms for complex differential equations using near-term processors, crucial for climate simulations that require petaflop-scale computing power. Quantum-enhanced systems can reduce energy consumption by up to 90 percent compared to conventional exascale supercomputers that consume 20-40 megawatts of power, while simultaneously improving forecast accuracy through better modeling of nonlinear atmospheric interactions.

The Quantum Threat to Cybersecurity: A Looming Challenge for Digital Security

While quantum computing promises tremendous benefits, it also poses an existential threat to the cryptographic foundations of modern digital security. The encryption algorithms protecting everything from online banking to government communications to blockchain transactions could become vulnerable once sufficiently powerful quantum computers emerge.

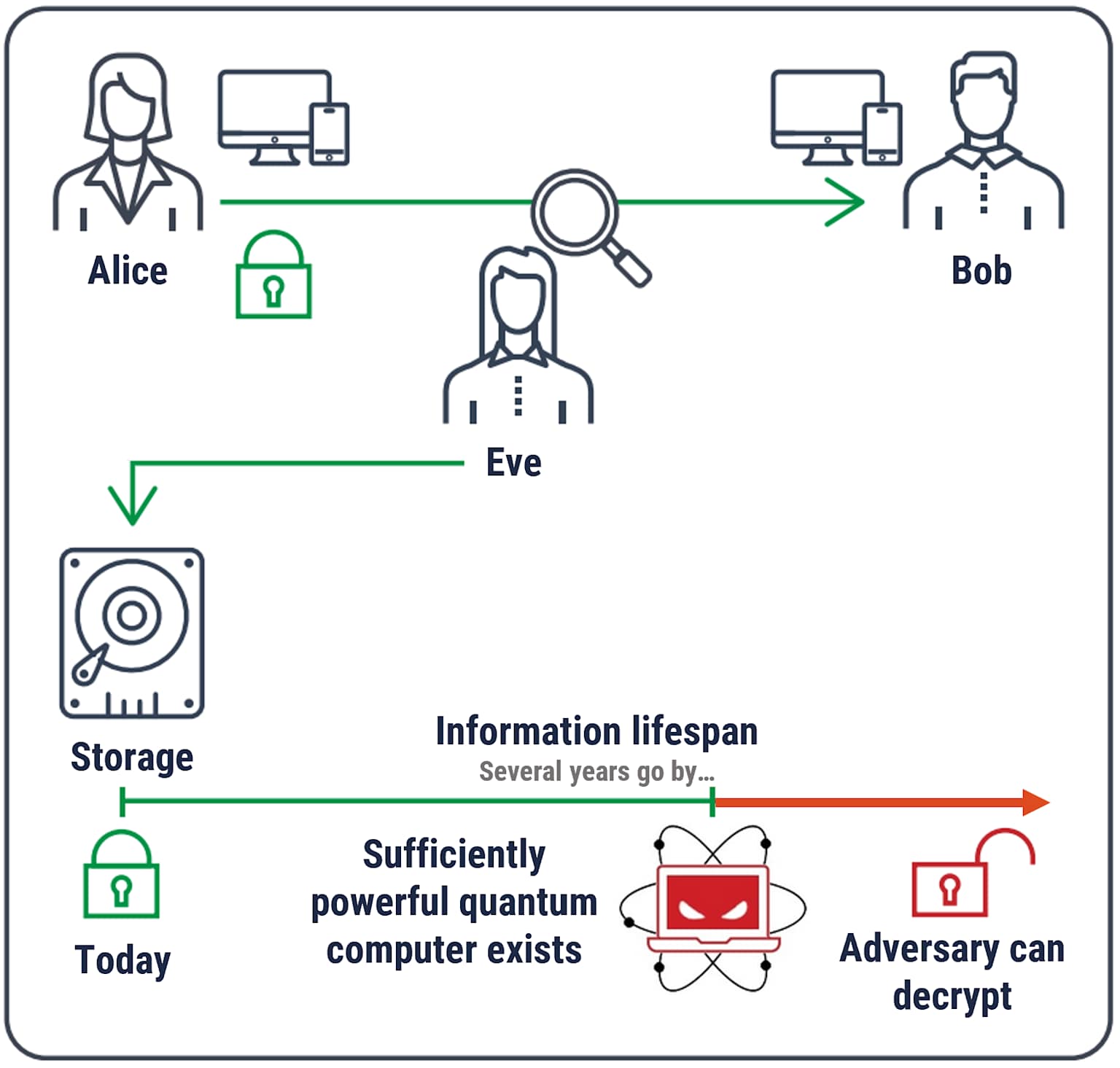

Illustration of quantum computing's potential threat to data encryption and network security over time, showing how a powerful quantum computer could decrypt encrypted information

Modern cybersecurity relies heavily on public-key cryptography, particularly RSA (Rivest-Shamir-Adleman) encryption and Elliptic Curve Cryptography (ECC). These systems secure the vast majority of internet traffic, including HTTPS web browsing, email communications, digital signatures, and virtual private networks. The security of these algorithms rests on mathematical problems that are extraordinarily difficult for classical computers to solve—specifically, factoring large numbers (for RSA) and solving discrete logarithm problems (for ECC). Breaking a 2048-bit RSA key using today's most powerful supercomputers would require computational times measured in millions or billions of years, making such encryption effectively unbreakable with current technology.

However, mathematician Peter Shor developed an algorithm in 1994 that fundamentally changed this calculus. Shor's algorithm allows quantum computers to factor large integers and solve discrete logarithm problems dramatically faster than any known classical algorithm. Where the running time of the best known classical factoring algorithms grows faster than any polynomial in the size of the number being factored, Shor's algorithm can accomplish the same task in polynomial time on a quantum computer. This means a sufficiently powerful, error-corrected quantum computer could break RSA-2048 encryption in something like hours or days, compared to the billions of years required by classical approaches.

The timeline for when cryptographically relevant quantum computers (CRQCs) will emerge remains debated among experts. Conservative estimates suggest 15-20 years may be needed to build fully error-corrected quantum machines with the hundreds of thousands to millions of physical qubits that published resource estimates call for to break strong encryption. More optimistic projections, including recent analyses from IBM and Google, suggest that quantum computers capable of threatening widely used cryptographic systems could emerge by the early 2030s, potentially sooner. IBM has already built processors exceeding 1,000 physical qubits, and its roadmap targets several thousand qubits by 2035. Raw physical qubit counts at that scale would not by themselves be enough to run Shor's algorithm against RSA-2048; what matters is how many error-corrected logical qubits a machine can sustain. The better-than-50-percent likelihood of breaking RSA-2048 sometimes attached to that time frame should be read as a forecast about when such error-corrected machines might exist, not as a property of a few-thousand-qubit device.

Grover's algorithm, developed by Lov Grover in 1996, presents a different but still significant threat to symmetric encryption like AES (Advanced Encryption Standard). While Grover's algorithm doesn't break symmetric cryptography outright, it provides a quadratic speedup for brute-force searching, effectively halving the security strength, measured in bits, of an encryption key. This means AES-128, currently considered secure, would offer only about 64 bits of effective security against an idealized quantum attack, which is too thin a margin for protecting sensitive data over the long term. The mitigation is straightforward: doubling symmetric key lengths maintains equivalent security in the quantum era, making AES-256 the recommended standard for post-quantum security.
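The halving rule is simple enough to express in code. This minimal Python sketch assumes an idealized attacker running Grover's algorithm with no hardware overhead, which is a simplification; the function name is illustrative.

```python
def effective_bits_against_grover(key_bits: int) -> int:
    """Effective security in bits under an idealized Grover search.

    Brute force over 2**key_bits keys takes on the order of
    2**(key_bits / 2) Grover iterations, so the exponent is halved.
    """
    return key_bits // 2


for key_bits in (128, 192, 256):
    print(f"AES-{key_bits}: about {effective_bits_against_grover(key_bits)} bits")
```

AES-256 therefore retains about 128 bits of effective security, the same level AES-128 offers against classical attackers today.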

The threat extends beyond future encryption to data encrypted today through "harvest now, decrypt later" attacks. Sophisticated adversaries can collect encrypted communications now and store them until quantum computers become available to decrypt the contents. This makes the quantum threat to cryptography an immediate concern rather than a distant future problem, particularly for sensitive information that must remain confidential for decades. In response, the National Institute of Standards and Technology (NIST) has published post-quantum cryptography standards, and organizations worldwide are beginning to implement quantum-resistant algorithms and hybrid encryption schemes that combine classical and post-quantum approaches.

Quantum Algorithms: The Software Powering Quantum Advantage

The true power of quantum computing emerges not just from quantum hardware but from quantum algorithms: specialized computational procedures designed to exploit quantum mechanical properties to solve specific problems faster than classical approaches, in some cases exponentially faster.

Shor's algorithm for integer factorization and discrete logarithm problems represents perhaps the most famous quantum algorithm, both for its elegant approach and its profound implications for cryptography. The algorithm works by transforming the factoring problem into a period-finding problem, which can be efficiently solved using quantum techniques. To factor a number N, it picks a number a and looks for the period of the function a^x mod N. At its core, Shor's algorithm uses quantum circuits for modular exponentiation to encode that period in the phases of a quantum state, and quantum phase estimation, built on the inverse Quantum Fourier Transform, to turn those phases into a measurement outcome from which the period can be computed classically. While implementing Shor's algorithm on current quantum hardware remains challenging due to the enormous number of high-fidelity qubits required, successful demonstrations on small numbers have validated the approach and motivated significant investment in quantum error correction.
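The reduction from factoring to period finding can be tried out classically on a tiny number. In the Python sketch below, the period is found by brute force, which is exactly the step a quantum computer would replace; everything else is ordinary classical arithmetic. The function names and the example values (15 and 7) are chosen for illustration.

```python
from math import gcd


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Requires gcd(a, n) == 1. This loop is the slow classical stand-in
    for the quantum period-finding step.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(a: int, n: int):
    """Try to split n using the period of a modulo n; None if a is unlucky."""
    shared = gcd(a, n)
    if shared != 1:
        return (shared, n // shared)   # a already shares a factor with n
    r = find_period(a, n)
    if r % 2 == 1:
        return None                    # odd period: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                    # trivial square root: pick another a
    return (gcd(half - 1, n), gcd(half + 1, n))


print(find_period(7, 15))          # 4
print(factor_from_period(7, 15))   # (3, 5)
```

For a 2048-bit modulus the brute-force loop would never finish, which is the whole point: the quantum part of Shor's algorithm finds the period efficiently, and the surrounding arithmetic stays classical.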

Grover's algorithm addresses unstructured search problems, providing a quadratic speedup over classical search algorithms. Where a classical computer must search through N items one at a time, requiring O(N) steps on average, Grover's algorithm can find the target item in approximately √N steps. For searching a database with one million entries, a classical search might require 500,000 queries on average, while Grover's algorithm needs only about 1,000 quantum iterations (roughly 785 by the standard (π/4)√N estimate). The algorithm works by preparing a uniform superposition of all possible states, then repeatedly applying a quantum operation that amplifies the amplitude of the target state while diminishing others, effectively rotating the quantum state toward the solution. Though the speedup is "only" quadratic rather than exponential, Grover's algorithm is remarkably general, applying to any problem that can be framed as searching for specific inputs that satisfy given criteria.
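The amplification step is easy to simulate for a small search space. The Python sketch below tracks the amplitudes directly: the oracle flips the sign of the target's amplitude and the diffusion step reflects every amplitude about the mean. It is a classical toy with illustrative function names, and it uses the (π/4)√N iteration count.

```python
import math


def optimal_iterations(num_items: int) -> int:
    """Standard Grover iteration count, floor(pi/4 * sqrt(N))."""
    return math.floor(math.pi / 4 * math.sqrt(num_items))


def grover_success_probability(num_items: int, target: int) -> float:
    """Simulate Grover's search and return the chance of measuring the target."""
    amplitudes = [1 / math.sqrt(num_items)] * num_items   # uniform superposition
    for _ in range(optimal_iterations(num_items)):
        amplitudes[target] = -amplitudes[target]          # oracle: mark the target
        mean = sum(amplitudes) / num_items
        amplitudes = [2 * mean - a for a in amplitudes]   # diffusion: reflect about the mean
    return amplitudes[target] ** 2


print(optimal_iterations(16))                       # 3
print(round(grover_success_probability(16, 5), 3))  # 0.961
print(optimal_iterations(1_000_000))                # 785
```

With 16 items, three iterations raise the target's probability from 1 in 16 to about 96 percent. Running more iterations than the optimum makes the probability fall again, so the count matters.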

Beyond these foundational algorithms, specialized quantum algorithms are emerging for specific application domains. Variational Quantum Eigensolver (VQE) and Quantum Approximate Optimization Algorithm (QAOA) represent hybrid quantum-classical approaches particularly suited for near-term quantum hardware, combining quantum circuits to prepare trial states with classical optimization to iteratively improve solutions. Quantum machine learning algorithms are transitioning from theoretical interest to practical implementation, leveraging quantum systems' ability to efficiently represent high-dimensional data and explore complex feature spaces. These algorithms show particular promise for optimization problems in finance, logistics planning, and molecular simulation where multiple variables must be simultaneously considered.
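The hybrid loop behind approaches like VQE can be illustrated with a one-qubit toy problem. In the Python sketch below, the "quantum" part is a simulated trial state with one adjustable angle and an energy defined as the expectation value of the Pauli-Z operator; the classical part is plain gradient descent using the parameter-shift rule. The problem, the starting angle, and the learning rate are all assumptions chosen for illustration, not a real chemistry workload.

```python
import math


def energy(theta: float) -> float:
    """Expectation value of Z for the trial state cos(theta/2)|0> + sin(theta/2)|1>.

    On real hardware this number would be estimated from repeated measurements.
    """
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    return amp0 * amp0 - amp1 * amp1


def parameter_shift_gradient(theta: float) -> float:
    """Gradient of the energy from two shifted energy evaluations."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))


def minimize(theta: float = 0.3, learning_rate: float = 0.4, steps: int = 100):
    """Classical optimizer: repeatedly nudge theta downhill."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


best_theta, best_energy = minimize()
print(best_theta, best_energy)  # theta approaches pi, energy approaches -1
```

The quantum processor only ever prepares a state and reports an energy; all the decision-making about which angle to try next happens on a classical computer, which is what makes this style of algorithm a fit for small, noisy devices.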

Overcoming the Challenges: Error Correction and the Path to Fault Tolerance

Despite remarkable progress, quantum computing faces formidable technical challenges that must be overcome before large-scale, reliable quantum computers become practical realities. Chief among these is the extreme fragility of quantum states.

Qubits are extraordinarily sensitive to their environment. Any interaction with the outside world—thermal fluctuations, electromagnetic interference, cosmic rays, vibrations, or even stray photons—can cause decoherence, the process by which qubits lose their quantum properties and collapse into classical states. Most current qubits maintain coherence for only microseconds to milliseconds, severely limiting the complexity of calculations they can perform. IBM's superconducting qubits, for example, have coherence times around 100-200 microseconds, restricting the depth of quantum circuits that can be reliably executed. This decoherence problem explains why quantum computers must operate in such extreme conditions, isolated from environmental noise in dilution refrigerators cooled to near absolute zero.

Error rates compound the decoherence problem. Basic quantum operations like gate operations typically have error rates of 0.1-1 percent per gate, meaning that one out of every 100 to 1,000 quantum operations introduces an error. While this might seem small, complex quantum algorithms require millions or billions of operations, causing errors to accumulate rapidly and corrupt results. Unlike classical computers where errors are rare and can be managed through straightforward redundancy, quantum errors are more insidious because measuring a qubit to check for errors destroys its quantum state—a fundamental constraint imposed by quantum mechanics.
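A rough back-of-the-envelope model shows how fast errors pile up. The Python sketch below assumes every gate fails independently with the same probability, which is a simplification of real hardware noise; the function name is illustrative.

```python
def success_probability(error_rate: float, num_gates: int) -> float:
    """Chance that no gate fails, assuming independent, identical gate errors."""
    return (1 - error_rate) ** num_gates


for num_gates in (100, 1_000, 10_000):
    chance = success_probability(0.001, num_gates)
    print(f"{num_gates} gates at 0.1% error: {chance:.4f}")
```

Even at the better end of the quoted range, a circuit of 1,000 gates runs cleanly only about a third of the time under this model, and a circuit of 10,000 gates almost never does.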

Quantum error correction provides a path forward by encoding logical qubits across multiple physical qubits using quantum entanglement and carefully designed codes. Surface codes, currently the most promising approach for scalable quantum computing, can tolerate relatively high error rates and are well-suited for two-dimensional qubit architectures. The basic idea is to spread quantum information across an entangled state of many physical qubits such that errors can be detected and corrected by measuring correlations between qubits without directly measuring the quantum information itself. However, this redundancy comes at a steep cost: creating a single logical qubit with sufficient error protection might require hundreds or even thousands of physical qubits.
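The idea of detecting an error by measuring correlations, without reading the protected value, can be seen in the three-bit repetition code. The Python sketch below is a classical analogue handling only bit flips, not a surface code, and its names are illustrative. The two parity checks say which bit disagrees with its neighbors but reveal nothing about whether the encoded value is 0 or 1.

```python
# Maps each pair of parity-check results to the position that should be flipped.
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def encode(bit: int) -> list:
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def syndrome(block: list) -> tuple:
    """Parities of neighboring pairs; identical for encoded 0 and encoded 1."""
    return (block[0] ^ block[1], block[1] ^ block[2])


def correct(block: list) -> list:
    """Undo a single bit flip using only the syndrome."""
    position = SYNDROME_TO_FLIP[syndrome(block)]
    fixed = list(block)
    if position is not None:
        fixed[position] ^= 1
    return fixed


damaged = encode(1)
damaged[2] ^= 1                 # a single error hits the third bit
print(syndrome(damaged))        # (0, 1)
print(correct(damaged))         # [1, 1, 1]
```

Quantum codes apply the same trick with parity measurements on entangled qubits, and they must also handle phase errors, which is one reason they need many more physical qubits per logical qubit.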

Google's December 2024 announcement of below-threshold error correction with their Willow processor marked a watershed moment. The team demonstrated that as they increased the number of physical qubits used to encode logical qubits from 3x3 to 5x5 to 7x7 grids, the error rate of the logical qubits actually decreased, and did so exponentially. This "below-threshold" performance is exactly what theoretical predictions said should happen but had never been experimentally demonstrated at this scale. It is strong evidence that the path to large-scale quantum computing through surface codes and error correction is viable, not just theoretical. Microsoft and Quantinuum reported a related milestone, entangling 12 logical qubits with a logical circuit error rate of about 0.0011 (0.11 percent), compared with about 0.024 (2.4 percent) for the corresponding physical qubits.
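The threshold behavior has a simple classical counterpart. The Python sketch below computes the failure rate of a repetition code decoded by majority vote, assuming independent bit flips; it is not a model of the surface code or of any specific processor, and the function name is illustrative. Below a physical error rate of 50 percent, adding redundancy drives the logical error down quickly, while above it, adding redundancy makes things worse.

```python
from math import comb


def logical_error_rate(physical_error: float, distance: int) -> float:
    """Probability that a majority of `distance` copies are flipped."""
    majority = distance // 2 + 1
    return sum(
        comb(distance, k) * physical_error ** k * (1 - physical_error) ** (distance - k)
        for k in range(majority, distance + 1)
    )


for distance in (3, 5, 7):
    print(distance, logical_error_rate(0.01, distance))   # shrinks with distance

for distance in (3, 5, 7):
    print(distance, logical_error_rate(0.6, distance))    # grows with distance
```

Real quantum codes have a much lower threshold, on the order of a percent or less for surface codes, which is why getting physical error rates under that line was the prerequisite for results of this kind.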

The quantum computing community now targets fault-tolerant quantum computers—systems where errors can be suppressed faster than they occur, enabling arbitrarily long quantum computations. IBM's roadmap envisions the Quantum Starling system for 2029, featuring 200 logical qubits capable of executing 100 million error-corrected operations, extending to 1,000 logical qubits by the early 2030s. These milestones will require continued advances in qubit coherence times, gate fidelities, error correction codes, and control electronics.

The Future Landscape: What Quantum Computing Means for the Next Decade

As we look toward 2030 and beyond, quantum computing appears poised to transition from laboratory demonstrations to practical commercial applications that impact everyday American life in tangible ways.

Near-term predictions suggest that hybrid quantum-classical computing will become standard by 2030, with quantum processors serving as specialized accelerators for specific problem classes while classical computers handle other tasks. Quantum-as-a-Service platforms provided by IBM, Microsoft, AWS, and emerging providers are democratizing access, allowing organizations to experiment with quantum algorithms without massive capital investments in quantum hardware infrastructure. This cloud-based delivery model is accelerating commercial adoption across industries, enabling companies to identify valuable use cases and develop quantum expertise before fault-tolerant systems arrive.

The pharmaceutical industry stands to benefit tremendously, with quantum modeling potentially reducing drug discovery cycles from 10-15 years to under one year by 2035. Hospitals could adopt quantum-enabled personalized medicine platforms for patient-specific drug testing, while quantum simulations could design more effective catalysts for industrial processes, enhancing energy efficiency in chemical manufacturing. Financial services will increasingly rely on quantum optimization for portfolio management, risk analysis, and fraud detection, with quantum neural networks potentially achieving accuracy levels beyond classical machine learning.

In cybersecurity, the landscape will fundamentally transform as quantum threats materialize and post-quantum cryptography becomes standard. By 2029, global quantum key distribution backbones may integrate with financial networks to secure international transactions. Governments are likely to deploy QKD-secured satellite networks protecting military and diplomatic communications. Organizations across sectors must begin transitioning to quantum-resistant encryption now, implementing hybrid classical-quantum cryptographic schemes that provide backward compatibility while protecting against future quantum attacks.

The job market will evolve dramatically, with analysts estimating that 250,000 quantum computing jobs will be needed by 2030. Universities are expanding quantum engineering programs, while workforce development initiatives aim to build expertise in quantum algorithms, cryogenic systems, photonics, and error correction. The convergence of quantum computing with artificial intelligence promises particularly transformative impacts, with quantum-enhanced AI potentially revolutionizing optimization, drug discovery, climate modeling, and machine learning on complex datasets.

Environmental applications could accelerate renewable energy adoption. Quantum algorithms will optimize electrical grids in real time, accommodating distributed renewable energy sources and managing demand from electric vehicles and data centers. Quantum modeling may design new superconductors and battery materials for cleaner energy storage. Quantum sensors could measure CO2 emissions with parts-per-billion precision while simulations aid in designing direct air capture systems.

Conclusion: Embracing the Quantum Revolution

Quantum computing represents more than just faster processors or more powerful algorithms—it embodies a fundamental reimagining of computation itself, one that harnesses the strange and counterintuitive properties of quantum mechanics to solve problems beyond the reach of any classical computer. For Americans navigating an increasingly complex technological landscape, understanding quantum computing has shifted from academic curiosity to practical necessity.

The technology stands at a genuine inflection point in 2025. Fundamental barriers that many researchers considered insurmountable—quantum error correction, scalability, demonstrating practical advantage—are being systematically addressed through coordinated innovation across hardware, software, and algorithms. Investment capital, government support, and demonstrated breakthroughs have created a robust ecosystem supporting commercial quantum development. While significant challenges remain, the trajectory suggests meaningful applications will emerge within the next five to ten years for specific problem classes spanning drug discovery, materials science, financial optimization, and cryptography.

The quantum revolution won't happen overnight, and quantum computers won't replace classical machines for everyday tasks like browsing the web or editing documents. Instead, quantum computing will complement classical systems, serving as specialized tools for problems where quantum mechanics provides inherent advantages. As this technology matures, it will touch virtually every aspect of modern life—from the medicines we take to the security of our financial transactions, from weather forecasts that save lives to materials that enable cleaner energy.

For businesses, researchers, policymakers, and citizens, the time to engage with quantum computing is now. Whether preparing for quantum threats to encryption, exploring quantum solutions to optimization challenges, or simply building quantum literacy to understand the changing technological landscape, taking action today positions us to thrive in the quantum future that's rapidly approaching. The quantum era is no longer a distant possibility—it's unfolding right now, and those who understand and embrace this revolutionary technology will shape the innovations that define the coming decades.